Not only does event sourcing inherit the complexities of the asynchronous world, it also amplifies them.

In this post, I will discuss what I have learnt from developing and supporting a system built on event sourcing. I will use a running example throughout, as I believe examples keep the discussion on track and make it easier to follow.

Example Scenario

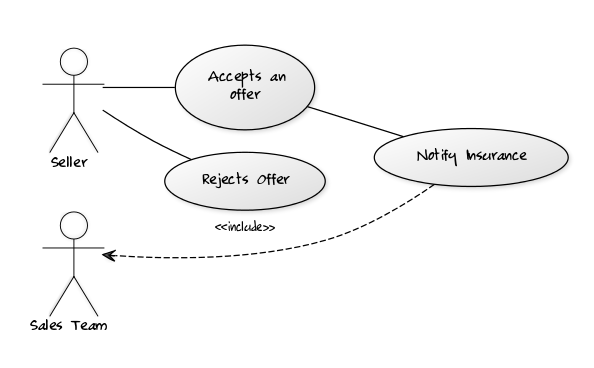

Let’s assume there is a car sales business with a pretty standard website that allows car owners to list their cars and potential buyers to search for, enquire about and purchase them. Buyers can make online offers, which the seller can either accept or reject. The process is something along these lines:

- The seller lists a car

- The buyer makes an offer

- The seller can accept or reject the offer

Now the business wants to introduce some cross-selling. When an offer is accepted by both parties, there is an opportunity to offer the buyer an insurance quote. If a seller accepts an offer, an email is sent to the buyer to inform them of the acceptance. Meanwhile, the business wants to notify the insurance sales team so that they can contact the buyer with an insurance quote.

The business sees it as a two-step opportunity:

- Low Priority Sales Lead:

  - Auto-generate a quote per offer.

  - Contact the buyer only once for all the offers made.

- High Priority Sales Lead:

  - The sales team contacts the buyer by phone.

  - Contact the buyer for each accepted offer to provide a quote.

Requirements / Constraints

- On offer made, generate a low priority sales lead, but only if the buyer does not already have a high or low priority sales lead.

- On offer accepted, generate a high priority sales lead and close the low priority sales lead.

- On offer rejected or withdrawn:

  - If one exists, auto-close the high priority sales lead for the buyer.

  - If the buyer has no other offers, auto-close the low priority sales lead.

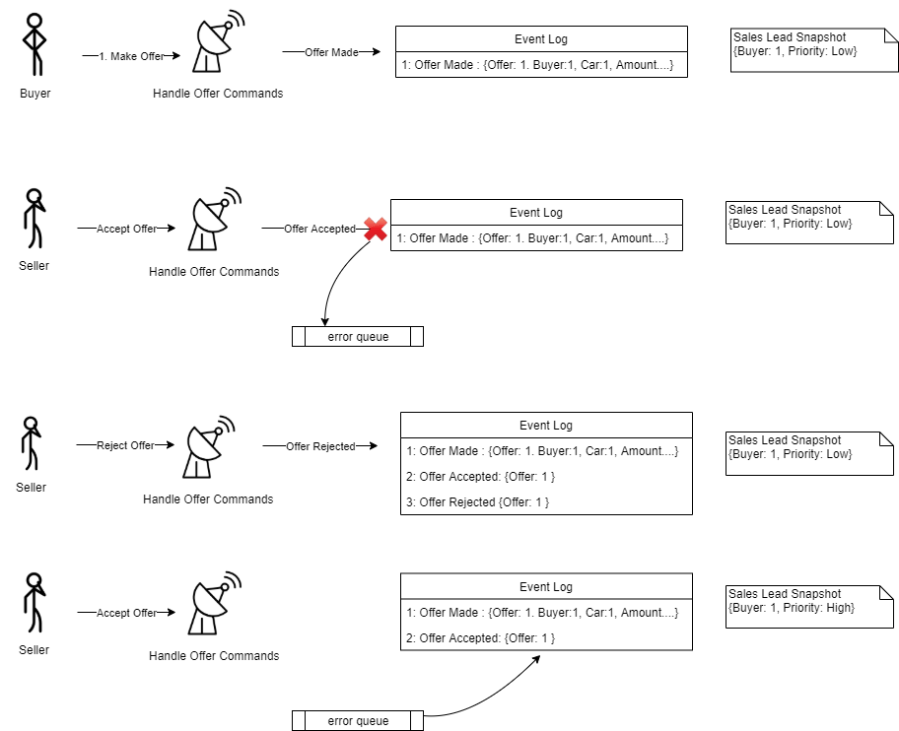

The team implementing this feature decided to build an event-sourced system that listens to events from the Offer system and generates sales leads. The team agreed to use “Delta” as the event size (more details in the fat vs thin events post). In a nutshell, the following events are recognised and handled:

- Offer Made – Low priority sales lead; auto-generated quote.

- Offer Accepted – High priority sales lead; manually call the buyer.

- Offer Rejected – Close high priority sales lead.

- Offer Withdrawn – Close low/high priority lead if no other unaccepted offers.
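The event list above could be modelled roughly as follows. This is only a sketch: it assumes a “delta” event carries just identifiers and the kind of change, and the names `OfferEventType`, `OfferEvent`, `HANDLING` and `describe` are made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class OfferEventType(Enum):
    OFFER_MADE = "OfferMade"
    OFFER_ACCEPTED = "OfferAccepted"
    OFFER_REJECTED = "OfferRejected"
    OFFER_WITHDRAWN = "OfferWithdrawn"


@dataclass(frozen=True)
class OfferEvent:
    """Assumed shape of a delta event: what changed, and for whom."""
    type: OfferEventType
    buyer_id: str
    offer_id: str


# Handling per event type, copied from the list above.
HANDLING = {
    OfferEventType.OFFER_MADE: "low priority sales lead; auto-generated quote",
    OfferEventType.OFFER_ACCEPTED: "high priority sales lead; manually call the buyer",
    OfferEventType.OFFER_REJECTED: "close high priority sales lead",
    OfferEventType.OFFER_WITHDRAWN: "close low/high priority lead if no other unaccepted offers",
}


def describe(event: OfferEvent) -> str:
    """Return a human-readable summary of how an event would be handled."""
    return f"{event.type.value} ({event.offer_id}, {event.buyer_id}): {HANDLING[event.type]}"


summary = describe(OfferEvent(OfferEventType.OFFER_REJECTED, "buyer-1", "offer-7"))
print(summary)  # OfferRejected (offer-7, buyer-1): close high priority sales lead
```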

Challenges

The following are the key challenges we faced during development and maintenance:

GOD

Guaranteed Order of Delivery (GOD) is a key requirement in most event-driven systems, especially when the implementation is based on event sourcing. If events are not delivered in the correct order, the consuming systems can end up with a totally different outcome. Let’s consider a scenario for our car sales example.

Scenario: the seller withdraws the acceptance right after accepting an offer:

- The buyer made an offer.

- The seller accepted the offer.

- The seller withdrew the acceptance shortly afterwards.

If the subscriber receives the events in this order, they should result in the following state changes:

| Events for the buyer | Sales Lead Priority |
| --- | --- |
| Offer Made | Low |
| Offer Accepted | High |
| Acceptance Withdrawn | Low |

God forbid, if GOD doesn’t work (the order of delivery, I mean) and we receive ‘Offer Accepted’ after the acceptance was withdrawn, the outcome could be different.

So when we receive the ‘Acceptance Withdrawn’ event, our system looks at its state to find an existing high priority sales lead for this buyer, but none exists at this stage because ‘Offer Accepted’ hasn’t been processed yet. We have the following options here:

- Ignore the event.

- Throw an exception so that someone can fix it manually.

- Re-queue the event (it will be covered in the next section: Resilience).

Let’s say we ignored the ‘Acceptance Withdrawn’ event:

| Event for the buyer | Sales Lead Priority |
| --- | --- |
| Offer Made | Low |
| Acceptance Withdrawn | Low (ignored, so no change) |
| Offer Accepted | High |

As you can see, the sales lead priority would end up as high instead of low, which is incorrect. A buyer who has just been disappointed by a withdrawn acceptance is probably not in the mood for an insurance sales call.
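To make the difference concrete, here is a minimal sketch of the “ignore” option for the single-offer case. The event names as strings and the `Priority`, `apply` and `replay` names are assumptions for illustration, not the real system’s model.

```python
from enum import Enum


class Priority(Enum):
    NONE = "none"
    LOW = "low"
    HIGH = "high"


def apply(priority: Priority, event: str) -> Priority:
    """Return the buyer's lead priority after one event (single-offer case)."""
    if event == "OfferMade":
        # Only raise a low priority lead if the buyer has no lead yet.
        return Priority.LOW if priority is Priority.NONE else priority
    if event == "OfferAccepted":
        return Priority.HIGH
    if event == "AcceptanceWithdrawn":
        # "Ignore" policy: with no high priority lead there is nothing to undo.
        return Priority.LOW if priority is Priority.HIGH else priority
    raise ValueError(f"unknown event: {event}")


def replay(events: list[str]) -> Priority:
    """Fold the events, in the order given, into a final priority."""
    priority = Priority.NONE
    for event in events:
        priority = apply(priority, event)
    return priority


in_order = ["OfferMade", "OfferAccepted", "AcceptanceWithdrawn"]
out_of_order = ["OfferMade", "AcceptanceWithdrawn", "OfferAccepted"]

print(replay(in_order))      # Priority.LOW
print(replay(out_of_order))  # Priority.HIGH
```

The same three events produce two different end states, purely because of the order in which they were processed.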

Resilience

What can go wrong? This healthy bit of sarcasm is food for thought. My answer is ‘almost anything’. While it is important to cover all the known failure scenarios, I prefer to spend more energy on how to deal with failure when it occurs. Building some resilience into the system with retry policies can save someone from being woken up at odd hours by alerts.

In our example from the GOD section, we will try to process the message a few times before giving up. Retries can be simple or complex. For the query side of the system, a retry policy is a favor you can do yourself, the support team and the actual user. But for commands, where system state changes are involved, retrying opens up a can of worms. Retrying events with side effects can be really complex. In an ideal world, you would expect all systems to be idempotent, so that you can retry the whole handling of an event, but that perfect-world scenario rarely exists.

I have discussed some aspects of it and a possible solution in my other post: Event Loves Commands.

Going back to the scenario we discussed in the GOD section, resilience via retry can potentially undo the order of delivery. Re-queuing an event on a transient exception may force the events to be processed in an incorrect order. If ‘Offer Accepted’ falls victim to a transient error and our system re-queues it, it may end up queued after ‘Acceptance Withdrawn’.

So basically, we have to ensure that every retry of this event handling is idempotent, whether the action is within the current domain or in other domains.
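One common way to make retries safer is to remember which event ids have already been handled and skip duplicates. The sketch below assumes each event carries a unique id. `TransientError` and `IdempotentHandler` are hypothetical names, and the in-memory set stands in for what would need to be durable storage in a real system.

```python
class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts and the like)."""


class IdempotentHandler:
    def __init__(self, handle, max_attempts=3):
        self._handle = handle
        self._max_attempts = max_attempts
        self._processed_ids = set()  # assumption: durable storage in real life

    def process(self, event_id, payload):
        """Return True if the event was handled now, False if it was a duplicate."""
        if event_id in self._processed_ids:
            return False
        for attempt in range(1, self._max_attempts + 1):
            try:
                # Retrying is only safe if a failed attempt leaves nothing half done.
                self._handle(payload)
                break
            except TransientError:
                if attempt == self._max_attempts:
                    raise
        self._processed_ids.add(event_id)
        return True


calls = []


def flaky_handler(payload):
    calls.append(payload)
    if len(calls) == 1:
        raise TransientError("first attempt fails")


handler = IdempotentHandler(flaky_handler)
first = handler.process("evt-1", "OfferAccepted")   # retried once, then handled
second = handler.process("evt-1", "OfferAccepted")  # duplicate delivery, skipped
print(first, second, len(calls))  # True False 2
```

This only covers deduplication inside one handler. Side effects in other domains need their own idempotency guarantees.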

GOD vs GOP:

In the previous example, ignoring and re-queuing are both valid options. Ultimately, the choice depends on the business requirements. Let’s assume the business is very conscious of the cost per call, so they don’t want to treat a sales lead as high priority when in fact it isn’t. This means that we have to process the events in the correct order. In this case, re-queuing ‘Acceptance Withdrawn’ when it is delivered before ‘Offer Accepted’ would be a good idea. This sort of validation is easier when events are not too coarse-grained. For example, if we had used a generic event body called ‘Offer Updated’ with a field for the current status, it would be more work to validate the event and channel it to the proper path. Moreover, generic event bodies gradually start picking up information that is either redundant or conflicting in some scenarios. So fine-grained events with a “delta” body are my preference in most cases.
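A rough sketch of the re-queue option, assuming a simple in-process queue of `(event_type, offer_id, attempts)` tuples. `MAX_ATTEMPTS`, `drain` and the dead-letter list are illustrative choices rather than part of the original system.

```python
from collections import deque

MAX_ATTEMPTS = 3  # hypothetical limit before giving up on an event


def drain(queue):
    """Process (event_type, offer_id, attempts) tuples until the queue is empty."""
    high_priority = set()  # offer ids with an open high priority lead
    dead_letters = []      # events we gave up on, left for manual inspection
    while queue:
        event_type, offer_id, attempts = queue.popleft()
        if event_type == "OfferAccepted":
            high_priority.add(offer_id)
        elif event_type == "AcceptanceWithdrawn":
            if offer_id in high_priority:
                high_priority.discard(offer_id)
            elif attempts + 1 < MAX_ATTEMPTS:
                # The matching acceptance has not arrived yet: try again later.
                queue.append((event_type, offer_id, attempts + 1))
            else:
                dead_letters.append((event_type, offer_id))
    return high_priority, dead_letters


queue = deque([
    ("AcceptanceWithdrawn", "offer-1", 0),  # arrives too early
    ("OfferAccepted", "offer-1", 0),
])
high_priority, dead_letters = drain(queue)
print(high_priority, dead_letters)  # set() []
```

The withdrawal is parked behind the acceptance, so no high priority lead is left open. A withdrawal whose acceptance never arrives ends up in the dead-letter list after three attempts.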

Stream Id / Partition Id

In event sourcing, the system logs all the events it receives. At run time, the events are loaded and processed to obtain the current state. When I say all the events, I mean all the events that fall into a particular group. In our example, we receive events for all buyers, but our main outcome (the sales lead) is per buyer, so we may want to load events only for a given buyer when calculating state. In this case, we should group our events by buyer, which makes the buyer id our stream id.
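Grouping by stream id can be pictured with a tiny in-memory store. This is a sketch only: `InMemoryEventStore` is a made-up name, and a real event store would persist the log.

```python
from collections import defaultdict


class InMemoryEventStore:
    """Append-only log, partitioned by stream id (the buyer id here)."""

    def __init__(self):
        self._streams = defaultdict(list)

    def append(self, stream_id, event):
        self._streams[stream_id].append(event)

    def load(self, stream_id):
        # Return a copy so callers cannot mutate the log.
        return list(self._streams.get(stream_id, []))


store = InMemoryEventStore()
store.append("buyer-1", "OfferMade")
store.append("buyer-2", "OfferMade")
store.append("buyer-1", "OfferAccepted")

print(store.load("buyer-1"))  # ['OfferMade', 'OfferAccepted']
```

Calculating the state for `buyer-1` never has to touch the events of `buyer-2`.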

Choosing the correct stream id is very important. Let’s complicate our example a bit further by adding multiple offers from the same buyer. The buyer made two offers on two different cars.

| # | Event for the buyer | Sales Lead Priority |
| --- | --- | --- |
| 1 | Offer Made (car 1) | Low |
| 2 | Offer Accepted (car 1) | High |
| 3 | Offer Made (car 2) | Low? |
| 4 | Offer Accepted (car 2) | High |
| 5 | Acceptance Withdrawn (car 1) | Low? |

Any mix-up here may result in a scenario that is very hard to debug. As shown in the table above, changes to the offers for different cars can interfere with each other because the sales lead is per buyer instead of per offer. Does that mean we need a sales lead per offer? But we may not really want to contact the buyer for a low priority sale on each offer made. So is it still a good idea to use the buyer as our stream id? Maybe, maybe not. It all depends on how we implement it. What we really don’t want is to tightly couple the idea of streams with our model (Sales Lead in this case). We may generate a low priority sales lead per buyer, whereas high priority leads can be per offer.

| # | Events for a buyer | Low Priority Lead | High Priority Leads |
| --- | --- | --- | --- |
| 1 | Offer Made for car1 | True | [ ] |
| 2 | Offer Accepted for car1 | False | [car1] |
| 3 | Offer Made for car2 | True | [car1] |
| 4 | Offer Accepted for car2 | False | [car1, car2] |
| 5 | Acceptance withdrawn for car1 | False | [car2] |

Or:

| # | Events for a buyer | Low Priority Lead | High Priority Leads |
| --- | --- | --- | --- |
| 1 | Offer Made for car1 | True | [ ] |
| 2 | Offer Made for car2 | True | [ ] |
| 3 | Offer Accepted for car1 | False | [car1] |
| 4 | Offer Accepted for car2 | False | [car1, car2] |
| 5 | Acceptance withdrawn for car1 | False | [car2] |

We get the desired end state in both scenarios. With resilience and streaming per buyer, we will always end up in the same state regardless of a glitch in the order of delivery.
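One way to get this order independence, though not necessarily how the system above was built, is to record per-offer facts in sets and derive the leads from them. The sketch assumes each offer is accepted and withdrawn at most once. `BuyerLeads` and `end_state` are illustrative names, and only the end states of the two tables are compared.

```python
from dataclasses import dataclass, field


@dataclass
class BuyerLeads:
    """Per-buyer facts kept as sets, so the order of arrival does not matter."""
    made: set = field(default_factory=set)
    accepted: set = field(default_factory=set)
    withdrawn: set = field(default_factory=set)

    def apply(self, event_type, car):
        target = {
            "OfferMade": self.made,
            "OfferAccepted": self.accepted,
            "AcceptanceWithdrawn": self.withdrawn,
        }[event_type]
        target.add(car)

    @property
    def high_priority(self):
        # One high priority lead per accepted offer that was not withdrawn.
        return sorted(self.accepted - self.withdrawn)

    @property
    def low_priority(self):
        # A single low priority lead per buyer, only while no high one is open.
        return bool(self.made) and not self.high_priority


def end_state(events):
    leads = BuyerLeads()
    for event_type, car in events:
        leads.apply(event_type, car)
    return leads.low_priority, leads.high_priority


first = [("OfferMade", "car1"), ("OfferAccepted", "car1"), ("OfferMade", "car2"),
         ("OfferAccepted", "car2"), ("AcceptanceWithdrawn", "car1")]
second = [("OfferMade", "car1"), ("OfferMade", "car2"), ("OfferAccepted", "car1"),
          ("OfferAccepted", "car2"), ("AcceptanceWithdrawn", "car1")]

print(end_state(first))   # (False, ['car2'])
print(end_state(second))  # (False, ['car2'])
```

Because adding to a set is commutative, even a withdrawal that arrives before its acceptance leads to the same end state.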

Snapshots

Snapshots are your friends in an event-sourced system. They save you from recalculating the entire state every time, especially when the per-stream transaction volume is high. They proved really helpful to me when I migrated a legacy system:

Instead of a big bang migration, we opted to migrate only when a new event was received. While handling an event, if the system didn’t find any existing state or an initial snapshot for it, it would talk to the legacy system and build an initial snapshot. This meant the older system took a little longer to retire, but it saved us from a big upfront migration.

As part of this ad-hoc migration, generating snapshots from the existing system helped us compare and test the state of the new system against the legacy system. We ran both systems in parallel, with the legacy system as the main system. We compared the snapshots of the new system with the snapshots generated from the legacy world to confirm that behaviour in production had not changed. This allowed us to fix and patch the system without any loss to the business. With every patch, we just had to delete all the events, let the system migrate on the fly, and compare the result with the legacy system.
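The idea of loading from a snapshot and seeding it lazily from the legacy system might look something like the sketch below. Everything here is an assumption for illustration: the `Snapshot` shape, the `load_state` signature, the integer state (a count of accepted offers) and the `fetch_legacy_state` callback.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    version: int  # number of logged events already folded into `state`
    state: int    # stand-in for real state, here a count of accepted offers


def load_state(stream_id, snapshots, event_log, fetch_legacy_state):
    """Rebuild state from the latest snapshot plus only the newer events."""
    snapshot = snapshots.get(stream_id)
    if snapshot is None:
        # Lazy migration: seed the stream from the legacy system on first use.
        snapshot = Snapshot(version=0, state=fetch_legacy_state(stream_id))
        snapshots[stream_id] = snapshot
    state = snapshot.state
    for event in event_log.get(stream_id, [])[snapshot.version:]:
        if event == "OfferAccepted":
            state += 1
    return state


snapshots = {"buyer-1": Snapshot(version=2, state=1)}
event_log = {
    "buyer-1": ["OfferMade", "OfferAccepted", "OfferAccepted"],
    "buyer-2": ["OfferAccepted"],
}
legacy = {"buyer-2": 4}  # pretend legacy system, keyed by buyer id

print(load_state("buyer-1", snapshots, event_log, legacy.get))  # 2
print(load_state("buyer-2", snapshots, event_log, legacy.get))  # 5
```

For `buyer-1` only the third event is replayed. For `buyer-2` there is no snapshot yet, so one is built from the legacy value first.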

Side Effects

When processing an event, another important part of the handling is separating state generation from side effects. When you replay events to rebuild state, you do not want to trigger side effects. In an event-driven system, side effects are mostly the generation of more events, but in some cases they are commands, such as sending an email. This is different from providing idempotency when handling a duplicate event (resilience).
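A minimal sketch of that separation, assuming a pure `evolve` function for state and a separate `side_effects` function that is consulted only for newly received events. All function names and the dictionary-based state are made up for illustration.

```python
def evolve(state, event):
    """Pure state transition: safe to run during replay."""
    if event == "OfferAccepted":
        return {**state, "high_priority": True}
    if event == "AcceptanceWithdrawn":
        return {**state, "high_priority": False}
    return state


def side_effects(event):
    """Commands a new event should trigger; never consulted during replay."""
    if event == "OfferAccepted":
        return ["send acceptance email to buyer"]
    return []


def rebuild(history):
    state = {"high_priority": False}
    for event in history:
        state = evolve(state, event)  # state only, no side effects
    return state


def handle_new(history, event, dispatch):
    state = evolve(rebuild(history), event)
    for command in side_effects(event):
        dispatch(command)
    return state


sent = []
state = handle_new(["OfferMade"], "OfferAccepted", sent.append)
print(state, sent)
# {'high_priority': True} ['send acceptance email to buyer']
```

Replaying the full history later with `rebuild` yields the same state without sending the email again.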

Conclusion

Event sourcing has its own challenges and benefits. In most cases, the system was easy to debug because it provided a complete log of the events it processed, but debugging and fixing the challenges mentioned above can be problematic.
